Reliability

Reliability is the degree of consistency of a measure. A test is reliable when it gives the same result each time it is repeated under the same conditions.

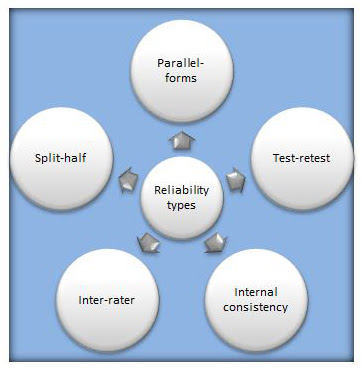

Types of Reliability Tests:

- Test-Retest : The test-retest method assesses the external consistency of a test by measuring its stability over time: the same test is given to the same group on two occasions and the two sets of scores are compared. Examples of appropriate tests include questionnaires and psychometric tests.

- Inter-rater Reliability : This refers to the degree to which different raters give consistent estimates of the same behaviour. Inter-rater reliability can be used for interviews.

- Split-half Method : A test for a single knowledge area is split into two parts, and both parts are given to one group of students at the same time. The scores from the two parts are then correlated; a high correlation suggests that the two halves measure the same thing consistently.

- Parallel forms : This method assesses the consistency of the results of two tests constructed in the same way from the same content domain.

- Internal Consistency Reliability : If all items on a test measure the same construct or idea, then the test has internal consistency reliability.
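
Several of the methods above come down to computing a correlation or a variance ratio, so a small sketch may help make them concrete. The Python below is only an illustration under stated assumptions: the function names (`pearson`, `split_half`, `cronbach_alpha`) and all the scores are made up for this example, the odd/even item split is just one possible way to halve a test, and Cronbach's alpha is a commonly used internal consistency statistic that the text above does not name.

```python
from statistics import mean, variance


def pearson(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ss_x = sum((a - mx) ** 2 for a in x)
    ss_y = sum((b - my) ** 2 for b in y)
    return cov / (ss_x * ss_y) ** 0.5


def split_half(scores):
    """Correlate the two halves of a test.

    `scores` holds one list of item scores per respondent.
    Assumption: the halves are the odd- and even-numbered items.
    """
    first_half = [sum(row[0::2]) for row in scores]
    second_half = [sum(row[1::2]) for row in scores]
    return pearson(first_half, second_half)


def cronbach_alpha(scores):
    """Cronbach's alpha: k/(k-1) * (1 - sum(item variances) / total variance)."""
    k = len(scores[0])
    item_vars = [variance([row[i] for row in scores]) for i in range(k)]
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical data: five respondents, total score at two points in time.
time_1 = [12, 15, 9, 20, 17]
time_2 = [13, 14, 10, 19, 18]
print("test-retest r:", round(pearson(time_1, time_2), 3))

# Hypothetical data: five respondents, four items each.
item_scores = [
    [3, 4, 3, 4],
    [2, 2, 1, 2],
    [5, 4, 5, 5],
    [1, 2, 1, 1],
    [4, 4, 3, 4],
]
print("split-half r:", round(split_half(item_scores), 3))
print("Cronbach's alpha:", round(cronbach_alpha(item_scores), 3))
```

The same `pearson` function can serve for test-retest, parallel forms, and two-rater comparisons, since each of them compares two sets of scores for the same people; only the source of the two lists changes.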
